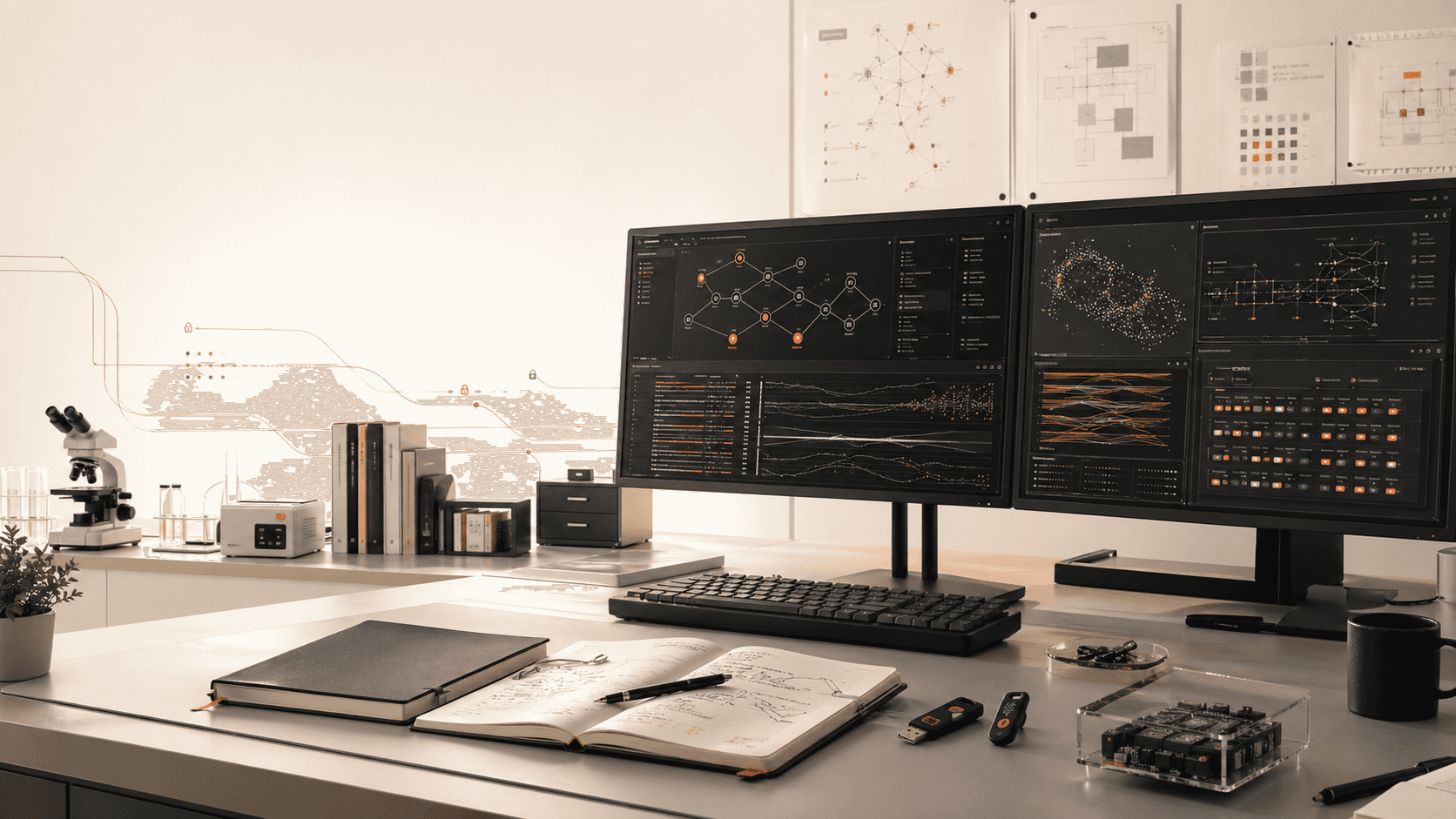

Agent workbenches

We build tools and methods for a world where agents do the work and humans do the thinking.

Agents grow more capable by the day, but our tools, workflows, and management practices are not keeping up. We are building new tools for you and for your agents.

Thesis

As agents become more capable, the surrounding system matters more: tools, workflows, review loops, permissions, management practices, and a shared language for what good agent work looks like.

Aesoteric studies that system and turns the useful parts into software, methods, and public artifacts.

agent_run.create({
  owner: "human judgment",
  scope: "bounded production work",
  exit: "evidence + decision"
})

method.ship({
  tools: "observable",
  review: "targeted",
  safety: "designed into the workflow"
})

Tools

Agent-native software needs to help humans think clearly while giving agents the structure they need to act reliably.

Interfaces for assigning work, following progress, reviewing evidence, and deciding what deserves human attention.

Patterns for splitting goals into bounded agent work with clear ownership, constraints, and review points.

Methods for testing agent output against intent, quality, safety, reliability, and the cost of oversight.

Operational practices for systems where the work product may be generated, changed, and verified by agents.

Guardrails for permissions, secrets, data access, approvals, and reversibility when agents act on real systems.

Open-source tools, reference workflows, and field notes that make agent-native work easier to inspect and repeat.

Methodology

We care about methodology: how work is delegated, how correctness is established, how safety boundaries are maintained, and how humans stay responsible without becoming the bottleneck.

Start from the decision the human should make, then design the agent work around producing the right evidence for that decision.

Give agents enough room to work, but make the scope, tools, data, and exit criteria explicit before production work begins.

Capture the plan, actions, files, approvals, and verification trail so agent work can be audited without reading every line.

Measure whether the result is correct, durable, and safe to ship, then feed that learning back into the workflow.
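
The four steps above could be sketched in code roughly as follows. This is a minimal illustration, not an Aesoteric API: `RunSpec`, `AuditEvent`, `startRun`, `record`, and `unmetCriteria` are hypothetical names, and it assumes each exit criterion is satisfied by a verification event with matching text. The spec must name the human decision and exit criteria before work starts, and the audit trail shows what evidence is still missing.

```typescript
// Hypothetical types for illustration only; not part of any published API.
type RunSpec = {
  decision: string;       // the decision the human owner will make
  owner: string;          // the human accountable for that decision
  scope: string;          // what the agent is allowed to change
  tools: string[];        // tools the agent may call
  data: string[];         // data sources the agent may read
  exitCriteria: string[]; // evidence required before the run can close
};

type AuditEvent = {
  kind: "plan" | "action" | "file" | "approval" | "verification";
  detail: string;
  at: string; // ISO 8601 timestamp
};

type Run = { spec: RunSpec; trail: AuditEvent[] };

// Refuse to start production work unless the spec is explicit.
function startRun(spec: RunSpec): Run {
  const missing: string[] = [];
  if (spec.decision.trim() === "") missing.push("decision");
  if (spec.owner.trim() === "") missing.push("owner");
  if (spec.scope.trim() === "") missing.push("scope");
  if (spec.exitCriteria.length === 0) missing.push("exitCriteria");
  if (missing.length > 0) {
    throw new Error(`Run spec is incomplete: ${missing.join(", ")}`);
  }
  return { spec, trail: [] };
}

// Append one entry to the audit trail.
function record(run: Run, kind: AuditEvent["kind"], detail: string): void {
  run.trail.push({ kind, detail, at: new Date().toISOString() });
}

// Exit criteria that have no matching verification event yet.
function unmetCriteria(run: Run): string[] {
  const verified = new Set(
    run.trail.filter((e) => e.kind === "verification").map((e) => e.detail)
  );
  return run.spec.exitCriteria.filter((c) => !verified.has(c));
}

// Example usage with made-up values.
const exampleRun = startRun({
  decision: "Ship the dependency upgrade?",
  owner: "release manager",
  scope: "package manifests only",
  tools: ["test runner"],
  data: ["repository"],
  exitCriteria: ["tests pass", "diff reviewed by owner"],
});
record(exampleRun, "plan", "upgrade one dependency at a time");
record(exampleRun, "verification", "tests pass");
console.log(unmetCriteria(exampleRun));
```

In this sketch the reviewer reads the list of unmet criteria and the trail rather than every line of output, which is one way to keep the human responsible without making them the bottleneck.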

Research questions

We work on the parts that become urgent when agents do meaningful production work: oversight, trust, management, verification, and the shape of the tools themselves.

Open source

Most of what we build is open source. That matters because agent methodology should be inspectable, reusable, and improved by the people doing the work.

Tools agents and humans can both use.

Repeatable workflows for agent-native production.

Research logs, decisions, and lessons from practice.

Talk to Aesoteric about tools, methods, research, or production workflows for a world where agents do more of the work.